Pocahontas Soundtrack

I have never seen the animated movie Pocahontas, so the #2 album this week is particularly meaningless to me. Some movie soundtracks have one or two hit songs that get big radio airplay, but I do not recognize any of the songs on this album.

I'll make it short and easy: I don't care about this album, and I don't think you should or will, either.

Go Big To Go Home

The first step of data science when working with a new dataset is to understand the high-level facts and relationships within the data. This is often done by exploring the data interactively with a tool like Python, R, or Matlab.

Recently I've been exploring a new dataset. It's a pretty big dataset: a few hundred gigabytes of data in compressed Parquet format. A rule of thumb is that data read off disk into memory takes ten to twenty times as much space in memory as it does on disk. For this dataset, that could mean more than ten terabytes of memory, which in 2025 is still a ridiculous amount of memory for a single machine. This is why working with data of this size requires tools that let you work with the data without loading all of it into memory at once.
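The rule of thumb above is just multiplication. Here is a small sketch of it; the 10x to 20x multiplier comes from the text, while the 600 GB on-disk size is a hypothetical stand-in for "a few hundred gigabytes."

```python
def estimate_memory_tb(on_disk_gb: float, low: float = 10.0, high: float = 20.0) -> tuple[float, float]:
    """Return a (low, high) in-memory estimate in terabytes.

    Uses the rough rule of thumb that data loaded from compressed Parquet
    takes 10x to 20x its on-disk size in RAM. A heuristic, not a measurement.
    """
    return (on_disk_gb * low / 1000, on_disk_gb * high / 1000)


# Hypothetical: 600 GB of compressed Parquet on disk.
low_tb, high_tb = estimate_memory_tb(600)
print(f"{low_tb:.0f} to {high_tb:.0f} TB")  # prints "6 to 12 TB"
```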

One of these tools is Polars. Quoting the Polars home page: "Want to process large data sets that are bigger than your memory? Our streaming API allows you to process your results efficiently, eliminating the need to keep all data in memory." It's still a bit rough around the edges with some unfinished and missing features, but overall it's a powerful and capable tool for data analysis. Lately I've been using Polars more and more, taking advantage of this "streaming" ability.

On the other hand, sometimes it's easiest to do things directly and skip all the low-memory "streaming" tricks. If I can get an answer more quickly by simply using lots of memory, especially if it's something I'm doing only once and not putting into a repeated process, then this can be the right choice. Polars can do "streaming" analysis, but at a certain point it has to coalesce things into an answer, and sometimes that answer can use a significant amount of memory.
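To make the "streaming" idea and its limit concrete without depending on a particular Polars version, here is a plain-Python sketch (not how Polars works internally) that processes rows in batches. A running mean needs only two numbers of state no matter how much data flows through, but a group-by count must keep one entry per distinct key, so the final answer can still be large. The batching helper and the data are hypothetical.

```python
from collections import Counter
from typing import Iterable, Iterator


def chunks(rows: Iterable[tuple[str, float]], size: int) -> Iterator[list[tuple[str, float]]]:
    """Yield lists of at most `size` rows, standing in for batches read from disk."""
    batch = []
    for row in rows:
        batch.append(row)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def streaming_stats(rows: Iterable[tuple[str, float]], size: int = 1000) -> tuple[float, Counter]:
    total = 0.0          # constant-size state for the mean
    count = 0
    per_key = Counter()  # state that grows with the number of distinct keys
    for batch in chunks(rows, size):
        for key, value in batch:
            total += value
            count += 1
            per_key[key] += 1
    return total / count, per_key


# Hypothetical data produced lazily, so it is never all in memory at once.
data = ((f"user{i % 50}", float(i)) for i in range(10_000))
mean, per_key = streaming_stats(data)
print(mean, len(per_key))  # prints 4999.5 50
```

With 50 distinct keys the result is tiny, but with billions of distinct keys the `Counter` alone could exhaust memory, which is the "coalescing" problem described above.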

There are many negative aspects of cloud computing that I won't get into here. However, there are some good things, and one of them is that you can scale resources up and down as needed. Cloud providers like Amazon Web Services and Google Cloud Platform offer many services, in particular virtual machines. When running a virtual machine you can choose the hardware specifications: CPU type and core count, amount of RAM, and other features like network speed, GPUs, or SSDs. A virtual machine can be booted on one hardware configuration, shut down, and then rebooted on a different configuration as needed. It's as if you took the hard drive out of your laptop and put it in a big workstation. All your data and settings are still there, but you've upgraded the hardware. This is something I take advantage of frequently!

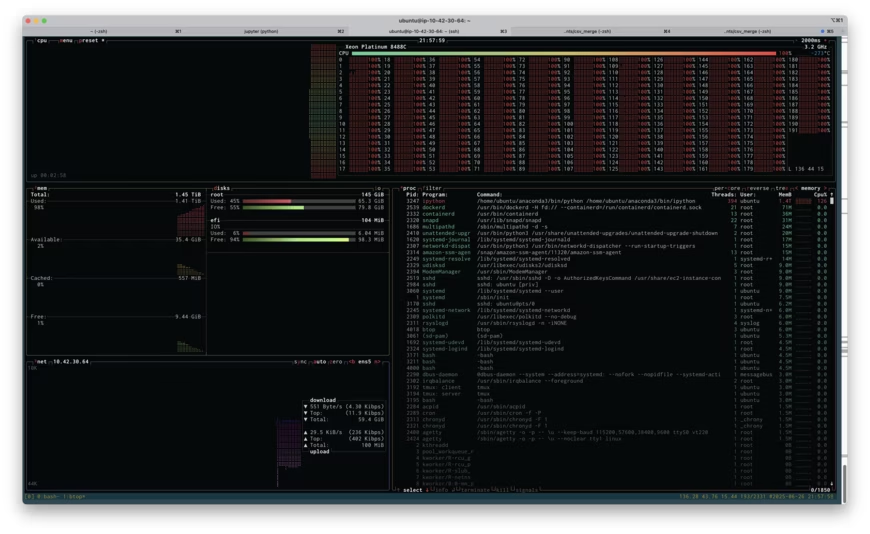

I was attempting a certain analysis of the new dataset on an EC2 virtual machine and I kept running out of memory. Instead of switching to low-memory tricks, I decided to see if I could save some time by simply rebooting my virtual machine on one of the larger instance types AWS offers: an r7i.48xlarge. This has 192 CPU cores and 1,536 GB of RAM, and it costs $12.70 per hour. I get paid more than $12.70 per hour, so if booting up a huge machine like this saves me even a little time, it's worth it.

Above is a screenshot of btop running on the r7i.48xlarge instance while I attempted to run my analysis. If you click on the image, you'll see the full-size screenshot. It shows that I'm about to run out of memory: 1.41TB used of 1.45TB. It also shows that I'm using all 192 cores at 100% load (the cores are labeled 0-191). Unfortunately, throwing all this memory at the problem didn't work: I ran out of memory anyway and had to resort to being more clever. Being clever took more time, of course, but if the high-RAM instance had worked, it would have paid off.

Playing with various server configuration tools (like this one) shows that a server equivalent to the r7i.48xlarge would cost at least $60,000 to buy. This is not something I need very often, so purchasing something this large would be ridiculous. However, renting it for half an hour, if it saves me a few hours, is definitely worth it. Also, it's kind of fun to say "yeah, I used 1.5TB of memory and 192 cores and it wasn't enough."
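Using the two figures from this post ($12.70 per hour to rent, at least $60,000 to buy), a quick back-of-the-envelope calculation shows how much rental time the purchase price would cover. This sketch ignores power, cooling, depreciation, and discounted pricing such as reserved or spot instances.

```python
HOURLY_RATE = 12.70      # r7i.48xlarge hourly price quoted above, USD
PURCHASE_PRICE = 60_000  # rough lower bound for comparable hardware, USD

half_hour_cost = HOURLY_RATE * 0.5
break_even_hours = PURCHASE_PRICE / HOURLY_RATE

print(f"Half an hour costs ${half_hour_cost:.2f}")  # prints "Half an hour costs $6.35"
# prints "Break-even after 4,724 hours (about 197 days of continuous use)"
print(f"Break-even after {break_even_hours:,.0f} hours "
      f"(about {break_even_hours / 24:.0f} days of continuous use)")
```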

Pink Floyd - Pulse

I believe that Pulse by Pink Floyd is the first double album I've reviewed so far. Despite its high price of $34.99 (~$76 in 2025), it debuted at #1.

Pulse is a live album, a format I often have mixed feelings about. On the one hand, live albums can have a different energy than studio albums, but they can also go awry with bad mixing or extended, aimless reworkings of songs. This album is kind of neither. I don't think the energy of the songs is improved compared to the studio versions, and there aren't many changes to the live songs. Pink Floyd was a progressive rock band, and progressive rock bands are known for extended solos in live performances. This album mostly lacks those, which I'm grateful for, but without changes to the songs there's little point to a live album.

According to the internet's brain, early versions of the CD album included a battery-powered flashing LED. A pulsing LED, one would say.

I don't think that this album amounts to much. If you like Pink Floyd, you might as well check it out, but I wouldn't cancel any other plans you have to listen to it.

Naughty by Nature - Poverty's Paradise

It's been over a month since the last album review, but finally the slow summer of music in 1995 has delivered some new albums in the top 10. This week debuting at #3 is Poverty's Paradise by Naughty by Nature.

Like anyone alive in 1991, I first heard Naughty by Nature through their huge hit O.P.P. The only song I'm sure I have heard before from Poverty's Paradise is Feel Me Flow, which does have a good flow, so I'm happy to feel it.

I don't think about Naughty by Nature much, and my play history confirms that: before I listened to this album I had only 15 plays of theirs since 2018. Listening to this album has reminded me that I like Naughty by Nature's kind of hip-hop, and that I should listen to them more when I'm in the mood for hip-hop. This is unusual, and it's the best outcome of listening to music from 30 years ago.

My recommendation is to check out this album and remind yourself why O.P.P. was such a big hit.

No Album Until June 16th

The late spring/early summer of 1995 must have been a slow period in the music biz because the top ten albums didn't change all that much. Looking through the Billboard top album sales, I won't have a new album to review until June 16th.

In the meantime, it's never the wrong time to listen to some albums that are great but I haven't reviewed. For example, The Downward Spiral by Nine Inch Nails was released over a year ago at #2, but I didn't review it because it was eclipsed by Superunknown by Soundgarden at #1. The Downward Spiral quickly fell out of the top ten so I didn't formally review it. Briefly, it's a great album, go listen to it!

Friday Soundtrack

I'm not going to belabor this. This week's album at #2 is the soundtrack for the 1995 buddy comedy Friday. I have not seen the movie, but the consensus seems to be that it wasn't horrible. The soundtrack is nothing special. Apparently the track Keep Their Heads Ringin' was a hit, although I don't remember it.

You can skip this album.

White Zombie - Astro Creep: 2000 Songs Of Love, Destruction And Other Synthetic Delusions Of The Electric Head

The only song I remember hearing off the #6 album this week is More Human Than Human. I don't think I've heard it in a long, long time. After listening to the entirety of Astro Creep: 2000 Songs Of Love, Destruction And Other Synthetic Delusions Of The Electric Head, I am not disappointed that my exposure to White Zombie has not been comprehensive. I did not like this album much and I can't recommend it.

Polars scan_csv and sink_parquet

The documentation for Polars is not the best, and figuring out how to do what's shown below took me over an hour. Here's how to read a headerless CSV file into a LazyFrame using `scan_csv` and write it to a Parquet file using `sink_parquet`. The key is to use `with_column_names` and `schema_overrides`. Despite what the documentation says, using `schema` doesn't work as you might imagine, and `sink_parquet` returns with a cryptic error about the dataframe missing column `a`.

This is just a simplified version of what I'm actually trying to do, but that's the best way to drill down to the issue. Maybe the search engines will find this and save someone else an hour of frustration.

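```python
import numpy as np
import polars as pl

# Build a small example DataFrame: a string column "a" and a float column "b".
df = pl.DataFrame(
    {"a": [str(i) for i in np.arange(10)], "b": np.random.random(10)},
)

# Write it out as a CSV file without a header row.
df.write_csv("/tmp/stuff.csv", include_header=False)

# Lazily scan the headerless CSV: with_column_names supplies the names,
# and schema_overrides sets the dtypes for those columns.
lf = pl.scan_csv(
    "/tmp/stuff.csv",
    has_header=False,
    schema_overrides={
        "a": pl.String,
        "b": pl.Float64,
    },
    with_column_names=lambda _: ["a", "b"],
)

# Stream the LazyFrame straight to a Parquet file.
lf.sink_parquet("/tmp/stuff.parquet")
```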

John Michael Montgomery - John Michael Montgomery

When I saw the #6 album for this week, I said "I've never heard of John Michael Montgomery," but that's not true. Last year I reviewed All-4-One, which included a cover of I Swear, a song originally recorded by John Michael Montgomery. I mentioned this in my review, but ten months later his name hadn't stuck in my memory.

I Swear isn't on this eponymous album, but I Can Love You Like That is, and All-4-One also covered it to great success. While I was playing this album, I Can Love You Like That seemed familiar, but I'm not sure whether it was the Country or the R&B version I had heard before. All-4-One owes John Michael Montgomery a lot of thanks!

Outside of the All-4-One/John Michael Montgomery connection, I find nothing interesting about this album. It's not bad, but it's nothing special. The first line of the first song includes the words "I drive a pickup truck." This album was not trying to break new ground. If you're into this kind of Country music, I'm sure it delivers. I'm not, so it doesn't for me, and I can't recommend this album.

Ol' Dirty Bastard - Return to the 36 Chambers: The Dirty Version

There were no new albums in the top ten last week, but this week another new hip-hop album debuts in the top ten, this time at #7. Return to the 36 Chambers: The Dirty Version by Ol' Dirty Bastard was his debut solo record. He was a member of Wu-Tang Clan from its founding until his death in 2004.

This review is basically a repeat of my last review. Like 2Pac, I was never into Ol' Dirty Bastard (nor Wu-Tang), and listening to this album hasn't changed my mind thirty years later. I acknowledge that Wu-Tang and Ol' Dirty Bastard are important to the history of hip-hop, and that alone means they're worth listening to at least once, but I'm just not into them.

Claude Code

A few weeks ago I started playing with Claude Code, which is an AI tool that can help build software projects for/with you. Like ChatGPT, you interact with the AI conversationally, using whole sentences. You can tell it what programming language and which software packages to use, and what you want the new program to do.

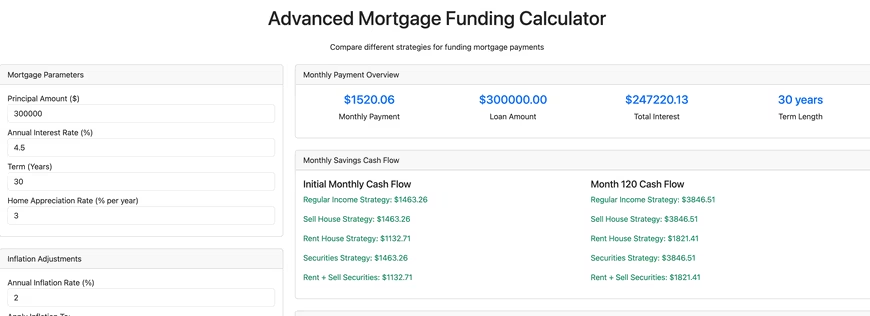

I started by asking it to build a mortgage calculator using Python and Dash. Python is one of the most popular programming languages, and Dash is an open source Python package that combines Flask, a tool to build websites using Python, and Plotly, a tool that builds high-quality interactive web plots. The killer feature of Dash is that it handles all the nasty and tedious parts of an interactive webpage (meaning JavaScript, eeeek!). It deals with webpage button clicks and form submissions for you, and you, the coder, can write things in lovely Python.

With a fair amount of back and forth, Claude Code built this advanced mortgage calculator, which is only sorta kinda functional. It does a fair amount of what I asked it to do, but it also doesn't do a fair amount of what I asked it to do, and it has a decent number of bugs. The good things it did included handling some of the tedious boilerplate, like creating the necessary directory hierarchy and files, including a README.md detailing how to run the software. Creating a Dash webpage requires writing Python function(s) and a template detailing how to insert the output of the function(s) into a webpage, and Claude Code handled that with aplomb.
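For context, the core of a basic mortgage calculator is the standard fixed-rate amortization formula, M = P·r·(1+r)^n / ((1+r)^n − 1), where P is the principal, r the monthly interest rate, and n the number of monthly payments. The sketch below is not the code Claude generated (that isn't reproduced here); it is a minimal, framework-free version of the calculation that a Dash callback could call. The loan figures are hypothetical.

```python
def monthly_payment(principal: float, annual_rate: float, years: int) -> float:
    """Fixed-rate mortgage payment via the standard amortization formula."""
    n = years * 12            # number of monthly payments
    r = annual_rate / 12      # monthly interest rate
    if r == 0:
        return principal / n  # zero-interest edge case
    growth = (1 + r) ** n
    return principal * r * growth / (growth - 1)


def amortization_schedule(principal: float, annual_rate: float, years: int):
    """Yield (month, interest, principal_paid, remaining_balance) tuples."""
    payment = monthly_payment(principal, annual_rate, years)
    balance = principal
    r = annual_rate / 12
    for month in range(1, years * 12 + 1):
        interest = balance * r
        principal_paid = payment - interest
        balance -= principal_paid
        yield month, interest, principal_paid, balance


# Hypothetical loan: $300,000 at 6% for 30 years.
payment = monthly_payment(300_000, 0.06, 30)
print(f"${payment:,.2f} per month")  # prints "$1,798.65 per month"
```

The schedule should end with a remaining balance of (almost exactly) zero, which makes a handy sanity check for any calculator built on top of it.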

What it didn't handle well were more complicated things, like writing an optimizer for funding/paying off a mortgage considering various funding sources and economic factors. It also didn't write the code using standard Python practices: the first time I looked at the code using VS Code and Ruff, Ruff reported over 1,000 style violations. If I found a bug in the code, sometimes I could tell Claude to fix it and it would, but other times it would simply fail. Altogether there are about 6,000 lines of code, and as the project got bigger it was clear that Claude was struggling. Simply put, there's a limit to the size or complexity of a codebase that Claude can handle. Humans are not going to be replaced, yet.

At this point I'm not sure what I'll do with the financial calculator. I don't think Claude can help me anymore, so I'd have to dive in manually to fix bugs and improve it, and I haven't decided if I will. In summary, my impression of Claude is that it's decent at creating the base of a project or application, but anything truly creative and complicated is beyond what it can do.

Claude Code isn't free (they give you $5 to start), and I had to deposit money to build this tool. I still have a bit of money to spend, so let's try a few tasks and see how Claude does. I've uploaded all of the code generated below to this repository.

Simple Calculator

I asked it to create a simple web-based calculator, and at a cost of $0.17, it created one that works well.

Simple C Hello World!

Create a template for a program in C. Include a makefile and a main.c file with a main function that prints a simple "hello world!". $0.08 later, it performed this simple task flawlessly.

Excel Telescope Angular Resolution

Create an Excel file that can calculate the angular resolution of a reflector telescope as a function of A) the diameter of the primary mirror and B) the altitude of the telescope. It went off and thought for a bit, and spent another $0.17 of my money. It output a file with a ".xlsx" extension, but the file can't be opened. Looking at the output of Claude, I suspect that this may be a file formatting issue because Claude is designed to handle text file output rather than binary.
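The diffraction-limit part of that request is a one-line formula, the Rayleigh criterion θ ≈ 1.22 λ / D. Altitude is harder to handle: from the ground, atmospheric seeing (often an arcsecond or more) usually dominates, and modeling how it changes with altitude is beyond a quick sketch. So this version, in Python rather than a spreadsheet, only takes the mirror diameter plus an assumed wavelength of 550 nm (green light) and reports the diffraction limit in arcseconds; the altitude part is left unaddressed.

```python
import math

ARCSEC_PER_RADIAN = 180 / math.pi * 3600  # about 206,265


def rayleigh_limit_arcsec(diameter_m: float, wavelength_m: float = 550e-9) -> float:
    """Diffraction-limited angular resolution (Rayleigh criterion), in arcseconds.

    Assumes a circular, unobstructed aperture and ignores the atmosphere,
    so altitude does not appear here at all.
    """
    theta_rad = 1.22 * wavelength_m / diameter_m
    return theta_rad * ARCSEC_PER_RADIAN


for d in (0.1, 0.2, 0.5, 1.0):  # hypothetical mirror diameters in meters
    print(f"{d:.1f} m mirror: {rayleigh_limit_arcsec(d):.3f} arcsec")
```

Each spreadsheet row would just apply the same formula to a different diameter.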

Text to Speech

Seeing that Claude struggled with creating binary output, next I asked it to create a Jupyter notebook (Jupyter notebooks have the extension .ipynb but they're actually text files) that uses Python and any required packages and can take a block of text and use text to speech to output the text to a sound file. This succeeded ($0.12), and in particular used gTTS (Google Text To Speech) to do the heavy lifting.

Rust Parallel Pi Calculator

Write a program in Rust that uses Monte Carlo techniques to calculate pi. Use multithreading. The input to the program should be the desired number of digits of pi, and the output is pi to that many digits. $0.11 later, I got a Rust program that crashes with an "attempt to multiply with overflow" error. Not great! I could ask Claude to try to fix the error, but I haven't.
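For comparison, here is a sketch of the same technique in Python (to match the other code in this post, not Rust). Each worker throws random points into the unit square and counts how many land inside the quarter circle; that fraction approaches π/4. It uses a thread pool as the prompt asked, although CPython's global interpreter lock means threads won't actually speed up this pure-Python loop; a process pool would be needed for real parallelism. The worker count, sample count, and seeds are arbitrary choices.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def count_inside(samples: int, seed: int) -> int:
    """Count random points in the unit square that fall inside the quarter circle."""
    rng = random.Random(seed)  # independent generator per worker
    inside = 0
    for _ in range(samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            inside += 1
    return inside


def estimate_pi(total_samples: int, workers: int = 4) -> float:
    """Split the samples across a thread pool and combine the counts."""
    per_worker = total_samples // workers
    with ThreadPoolExecutor(max_workers=workers) as pool:
        counts = pool.map(count_inside, [per_worker] * workers, range(workers))
        inside = sum(counts)
    return 4 * inside / (per_worker * workers)


print(estimate_pi(400_000))
```

The catch is in the prompt itself: the Monte Carlo error shrinks only like 1/√N, so each extra correct digit of π needs roughly 100 times as many samples. Asking for more than a handful of digits implies sample counts far beyond what any machine can run, and a naive integer sample count can overflow, which may be related to the crash above.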

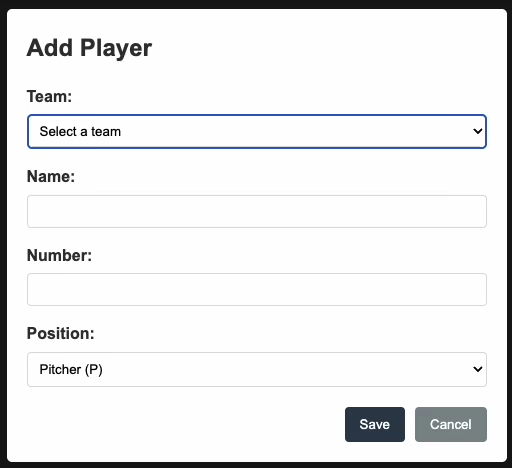

Baseball Scoresheet Web App

Create a web app for keeping score of a baseball game. Make the page resemble a baseball scoresheet. Use a SQLite database file to store the data. Make the page responsive such that each scoring action is saved immediately to the database. At a cost of $0.93, it output almost 2,000 lines of code. The resulting npm web app doesn't work. Upon initial page load it asks the user to enter player names, numbers, and positions, but no matter what you do, you cannot get past that. Bummer!
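The "save each scoring action immediately" requirement, at least, is straightforward with SQLite: insert one row per event and commit right away. Here is a minimal sketch using Python's built-in sqlite3 module; the table layout, column names, and player names are hypothetical, and a real scoresheet would need much more (lineups, substitutions, pitch counts).

```python
import sqlite3


def open_scorebook(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a scorebook database with a hypothetical plays table."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS plays (
               id      INTEGER PRIMARY KEY AUTOINCREMENT,
               inning  INTEGER NOT NULL,
               half    TEXT    NOT NULL CHECK (half IN ('top', 'bottom')),
               batter  TEXT    NOT NULL,
               result  TEXT    NOT NULL  -- scorer's notation, e.g. '1B', 'K', '6-4-3'
           )"""
    )
    return conn


def record_play(conn: sqlite3.Connection, inning: int, half: str, batter: str, result: str) -> None:
    """Store one scoring action and commit immediately, so nothing is lost on a crash."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO plays (inning, half, batter, result) VALUES (?, ?, ?, ?)",
            (inning, half, batter, result),
        )


conn = open_scorebook()
record_play(conn, 1, "top", "Smith", "1B")
record_play(conn, 1, "top", "Jones", "6-4-3")
print(conn.execute("SELECT COUNT(*) FROM plays").fetchone()[0])  # prints 2
```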

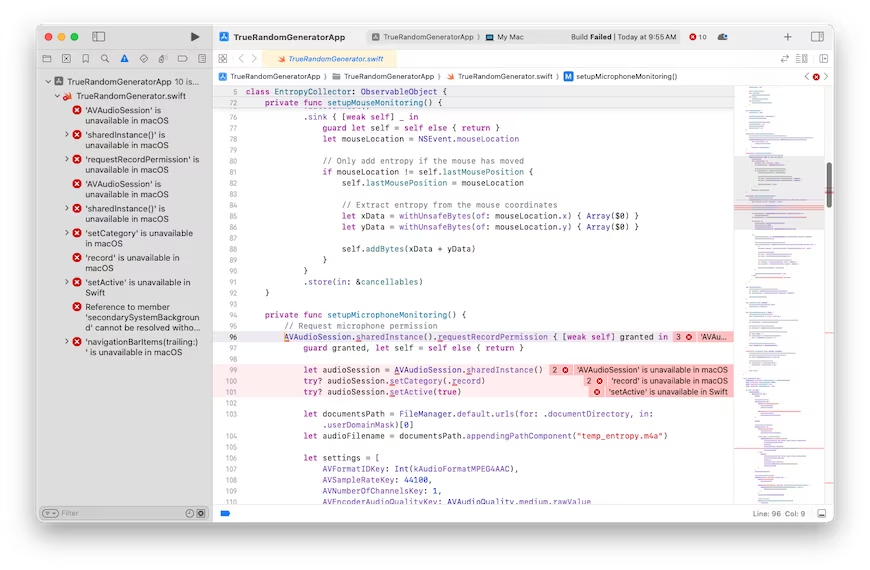

Random Number Generator

It looks like the more complicated the ask gets, the worse Claude gets. Here's one more thing I'll try that I have no idea how to do myself. We'll see how well Claude does at it. Create a Mac OS program that generates a truly random floating point number. It should not use a pseudo-random number generator. It should capture random input from the user as the source of randomness. It should give the user the option of typing random keyboard keys, or mouse movements, or making noises captured by the microphone. Please use the swift programming language. Create a Xcode compatible development stack. Create a stylish GUI that looks like a first-class Mac OS program. $0.81 later, including one request to fix a bug, its second try was still broken, with 10 bugs. Clearly, I've pushed Claude past its breaking point.
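For what it's worth, the core idea of that request fits in a few lines. One common approach is to collect unpredictable measurements from the user (here, hypothetical nanosecond timestamps of key presses), run them through a cryptographic hash to mix the entropy together, and map 53 of the resulting bits to a float in [0, 1). This sketch is in Python rather than Swift, skips the GUI and microphone entirely, and does not estimate how much entropy the input actually contains, which a serious implementation would need to do.

```python
import hashlib
import struct


def float_from_entropy(samples: list[int]) -> float:
    """Hash raw user-input measurements and map 53 bits to a float in [0, 1).

    `samples` might be keystroke or mouse-event timestamps in nanoseconds.
    The hash only mixes the entropy already present; it cannot add any.
    """
    raw = b"".join(struct.pack("<q", s) for s in samples)  # 8 bytes per sample
    digest = hashlib.sha256(raw).digest()
    bits = int.from_bytes(digest[:8], "big") >> 11  # keep the top 53 bits
    return bits / (1 << 53)


# Hypothetical key-press timestamps (nanoseconds) captured from the user.
timestamps = [1_712_000_000_123_456_789, 1_712_000_000_311_902_114, 1_712_000_000_498_007_553]
print(float_from_entropy(timestamps))
```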

I'm guessing that all of the broken code can be fixed, maybe by Claude itself, though some cases might ultimately require human intervention. I'm optimistic that it could fix the Rust/pi bug, but I'm not optimistic that it can fix the baseball or random number generator code. AI code generation might be coming for us eventually, but not today.

2Pac - Me Against the World

There were no new albums in the top ten last week, but this week a new entrant rockets to #1. I was never that into 2Pac, and listening to this album this week hasn't changed my mind. I acknowledge that he was one of the best at his craft, and I like some of his contemporaries in hip-hop, but for whatever reason 2Pac just doesn't do it for me.

Considering his historical importance it's probably worth your time familiarizing yourself with 2Pac, but I personally don't endorse his music.

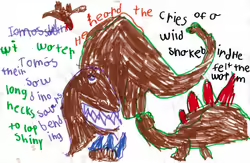

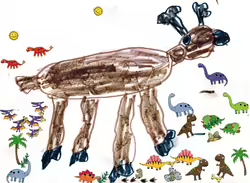

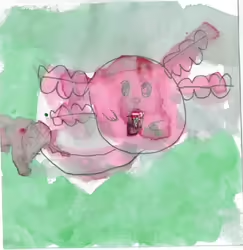

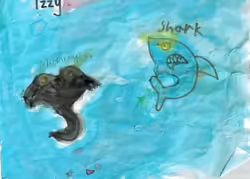

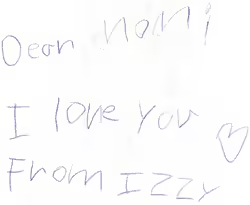

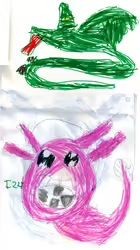

Kid Arts

Here's a bunch of various kid art for March 2025. It appears that a few browsers on macOS, including Chrome, Opera, and Vivaldi, have issues with the black and white AVIF images below. Firefox and Safari appear to work just fine. Enjoy!


Bruce Springsteen - Greatest Hits

Last week there was no new album in the top ten, but this week a new arrival shot straight to the top. Released in 1995, this was Bruce Springsteen's first "Greatest Hits" album. In the thirty years since, quite a few more have been released.

Springsteen certainly wasn't at peak popularity in 1995; his most recent albums, released in 1992 (Human Touch and Lucky Town), didn't match the success of his earlier work. His contribution of the song Streets of Philadelphia to the film of the same name in 1993 definitely helped keep him relevant. A decade removed from his best and most popular album was probably a good time to revisit his twenty years of music. Many critics dislike compilation/best of albums, but they are obviously very popular with consumers.

Springsteen is currently my #1 most listened to artist on last.fm. I am obviously biased in favor of his music. My favorite Springsteen compilation album is probably The Promise, but this first Greatest Hits album is a good collection of the best of his first two decades of songs.

Thinking back, I wasn't into Springsteen in 1995 as much as I am now. When I was a teenager, his kind of rock music wasn't in fashion, and it took until the wisdom of adulthood for me to discover it.

I don't necessarily recommend this exact compilation of Springsteen, but I do recommend listening to his library. A more recent Greatest Hits from 2024 is another good choice, especially in the 31 song digital version. You should check it out!

Live - Throwing Copper

According to the chart, Throwing Copper by Live had been out for almost a year (43 weeks) before it cracked the top ten. The good news for Live was that its trajectory continued upwards all the way to the top: according to Wikipedia, the album took 52 weeks to reach #1, in May 1995.

I seem to remember some rumblings when this album was popular that Live was secretly a Christian rock band crossing over to the mainstream, and that some of the songs like Lightning Crashes were anti-abortion songs. Reading the Wikipedia page for Lightning Crashes, it seems that some of that confusion might have come from the music video. In truth, the song was dedicated to a high school friend of the lead singer who was killed by a drunk driver.

My opinion of Live has perhaps been tainted by these rumblings, and I never got deeply into their music. My play count for Live is small, and a few of their contemporaries, like Collective Soul, Bush, and Counting Crows, have quite a few more plays from me. Listening now, I think the music is just a bit too corny and a bit over the top for me.

My recommendation is that Throwing Copper is worth a stream, but it's not spectacular. It is very 90s, and it is fun to re-discover some of Live's music from thirty years ago.
