Anita Baker - Rhythm of Love

Sitting at #3, Rhythm of Love by Anita Baker made zero impression on me. Nothing negative, nothing positive, nothing. That's all I really have to say about it.

more ...Eric Clapton - From The Cradle

Debuting at #1, From the Cradle by Eric Clapton is 100% blues. This album was released on the heels of "Tears in Heaven" which likely contributed to the popularity of this album. According to this fan ranking site it's one of his best albums. While other people may find it great, it doesn't make a big impression on me. For me, Slowhand and Unplugged are much more interesting. Certainly, From the Cradle is well done, but it just doesn't make me feel the things some of his other work does.

My recommendation is to check it out, you may like it, or not.

more ...The Three Tenors In Concert 1994

It takes a rare thing for an album by three(*) opera singers to make the top ten in album sales. It takes a cultural moment where an opera singer, or singers, is widely enough known to garner the kind of attention required to sell enough albums to make the top ten. It also takes a certain kind of album; a whole opera would not be able to sell as well as a "greatest hits" collection, which is what this album is. This week, The Three Tenors in Concert 1994 is in the seventh position on the charts. It looks like this album will rise to as high as fourth in sales, which in 1994 is a fair number of copies sold.

Curiously, the only streaming services that appear to have rights to this album are YouTube (and its eponymous music service), and SoundCloud. Notably Spotify and Tidal do not have it. Luckily I have access to YouTube Music so I listened to it there.

As mentioned above, this album is mix of "greatest hits" of opera and popular music. I am not a big opera afficionado, but even I can tell that these tenors are far more comfortable singing opera than popular songs. The opera songs are clearly very well sung. Unfortunately, the popular songs, like My Way, are halting and impacted by the fact that english is not these singers first language. I think that if they had stuck to singing only opera's greatest hits, it would have made the album better at the expense of its popularity. But as it is, the popular songs are not good. If you care to, listen to Sinatra's My Way, and then My Way off this album to hear what I mean.

Honestly, it appears that even the rights holders to this album are content to let this album fade into history. Streaming services are where the listeners are, and by keeping it off of most services, they are making it almost impossible to find. If I didn't have access to YouTube Music, I would not have put in the effort to listen to it. I think that you, dear reader, can safely leave this album in the past.

(*) There are four men on the album cover. I guess the fourth is the conductor of the orchestra. Still, it's incongruous to have "Three Tenors" and four people in the picture.

more ...Boyz II Men - II

Shooting to #1 in its first week on the charts, II by Boyz II Men arrived a bit too late for my (pre-)teenaged angst, which I feel peaked during middle school. During middle school the Boyz II Men hit End of the Road was huge and listening to it now evokes memories of awkward middle school feelings. By the time I got to high school I think my angst (which was probably lower than many of my peers) was already subsiding. I think because of this, II and the songs on it, don't quite have the same impact as those off of Boyz II Men's first album Cooleyhighharmony.

There are two huge hits off of II, I'll Make Love To You and On Bended Knee, which me and most of my readers have surely heard before and continue to get radio airplay.

Boyz II Men are squarely in the R&B genre, and while I like R&B, it's not my main go-to. If I were to listen to some Boyz II Men, I would probably listen to Cooleyhighharmony before listening to II. II is high quality 90's R&B with record-breaking hit songs, but for me it just doesn't hit the same.

more ...The Offspring - Smash

It was over 20 years ago, but if my memory is correct, I've seen The Offspring in concert twice. Needless to say, Smash is one of my favorite albums. On my streaming service (which is Tidal at the moment) it was already added to my list of favorite albums long before I wrote this review. According to last.fm, I've listened to this album almost 350 times.

This album is their breakthrough album into worldwide popularity. The song Come Out and Play reached #1 on the charts and received extensive radio airplay. It's likely, therefore, that I heard that song and the other big hit off the album, Self Esteem, on the radio when this album came out. However, I admit that I didn't really get into the band until I was in college.

I recommend this album very highly, especially if you're in the mood for some high energy punk rock. I recommend memorizing the string of curses in the bridge of Bad Habit; it's useful when you want to let off some steam.

more ...Setting Hadoop Node Types on AWS EMR

Amazon Web Services (AWS) offers dozens (if not over one hundred) different services. I probably use about a dozen of them regularly, including Elastic Map Reduce (EMR) which is their platform for running big-data things like Hadoop and Spark.

EMR runs your work on EC2 instances, and you can pick which kind(s) you want when the job starts. You can also pick the "lifecycle" of these instances. This means you can pick some instances to run as "on-demand" where the instance is yours (barring a hardware failure), and other instances to run as "spot" which costs much less than on-demand but AWS can take away the instance at any time.

Luckily, Hadoop and Spark are designed to work on unreliable hardware, and if previously done work is unavailable (e.g. because the instance that did it is no longer running), Hadoop and Spark can re-run that work again. This means that you can use a mix of on-demand and spot instances that, as long as AWS doesn't take away too many spot instances, will run the job for lower cost than otherwise.

A big issue with running Hadoop on spot instances is that multi-stage Hadoop jobs save some data between stages that can't be redone. This data is stored in HDFS, which is where Hadoop stores (semi)permanent data. Because we don't want this data going away, we need to run HDFS on on-demand instances, and not run it on spot instances. Hadoop handles this by having two kind of "worker" instances: "CORE" instances that run HDFS and have the important data, and "TASK" types that do not run HDFS and store easily reproduced data. Both types share in the computational workload, the difference is what kind of data is allowed to be stored on them. It makes sense, then, to confine "TASK" instances to spot nodes.

The trick is to configure Hadoop such that the instances themselves know what kind of instance they are. Figuring this out was harder than it should have been because AWS EMR doesn't auto-configure nodes to work this way; the user needs to configure the job to do this including running scripts on the instances themselves.

I like to run my Hadoop jobs using mrjob which makes development and running Hadoop with Python easier. I assume that this can be done outside of mrjob, but its up to the reader to figure out how to do that.

There are three parts to this. The first two are two Python scripts that are run on the EC2 instances, and the third is modifying the mrjob configuration file. The Python scripts should be uploaded to a S3 bucket because they will be downloaded to each Hadoop node (see the bootstrap actions below).

With the changes below, you should be able to run a multi-step Hadoop job on AWS EMR using spot nodes and not lose any intermediate work. Good luck!

make_node_labels.py

This script tells yarn what kind of instance types are available. This only needs to run once.

#!/usr/bin/python3

import subprocess

import time

def run(cmd):

proc = subprocess.Popen(cmd,

stdout = subprocess.PIPE,

stderr = subprocess.PIPE,

)

stdout, stderr = proc.communicate()

return proc.returncode, stdout, stderr

if __name__ == '__main__':

# Wait for the yarn stuff to be installed

code, out, err = run(['which', 'yarn'])

while code == 1:

time.sleep(5)

code, out, err = run(['which', 'yarn'])

# Now we wait for things to be configured

time.sleep(60)

# Now set the node label types

code, out, err = run(["yarn",

"rmadmin",

"-addToClusterNodeLabels",

'"CORE(exclusive=false),TASK(exclusive=false)"'])

get_node_label.py

This script tells Hadoop what kind of instance this is. It is called by Hadoop and run as many times as needed.

#!/usr/bin/python3

import json

k='/mnt/var/lib/info/extraInstanceData.json'

with open(k) as f:

response = json.load(f)

print("NODE_PARTITION:", response['instanceRole'].upper())

mrjob.conf

This is not a complete mrjob configuration file. It shows the essential parts needed for setting up CORE/TASK nodes. You will need to fill in the rest for your specific situation.

runners:

emr:

instance_fleets:

- InstanceFleetType: MASTER

TargetOnDemandCapacity: 1

InstanceTypeConfigs:

- InstanceType: (smallish instance type)

WeightedCapacity: 1

- InstanceFleetType: CORE

# Some nodes are launched on-demand which prevents the whole job from

# dying if spot nodes are yanked

TargetOnDemandCapacity: NNN (count of on-demand cores)

InstanceTypeConfigs:

- InstanceType: (bigger instance type)

BidPriceAsPercentageOfOnDemandPrice: 100

WeightedCapacity: (core count per instance)

- InstanceFleetType: TASK

# TASK means no HDFS is stored so loss of a node won't lose data

# that can't be recovered relatively easily

TargetOnDemandCapacity: 0

TargetSpotCapacity: MMM (count of spot cores)

LaunchSpecifications:

SpotSpecification:

TimeoutDurationMinutes: 60

TimeoutAction: SWITCH_TO_ON_DEMAND

InstanceTypeConfigs:

- InstanceType: (bigger instance type)

BidPriceAsPercentageOfOnDemandPrice: 100

WeightedCapacity: (core count per instance)

- InstanceType: (alternative instance type)

BidPriceAsPercentageOfOnDemandPrice: 100

WeightedCapacity: (core count per instance)

bootstrap:

# Download the Python scripts to the instance

- /usr/bin/aws s3 cp s3://bucket/get_node_label.py /home/hadoop/

- chmod a+x /home/hadoop/get_node_label.py

- /usr/bin/aws s3 cp s3://bucket/make_node_labels.py /home/hadoop/

- chmod a+x /home/hadoop/make_node_labels.py

# nohup runs this until it quits on its own

- nohup /home/hadoop/make_node_labels.py &

emr_configurations:

- Classification: yarn-site

Properties:

yarn.node-labels.enabled: true

yarn.node-labels.am.default-node-label-expression: CORE

yarn.node-labels.configuration-type: distributed

yarn.nodemanager.node-labels.provider: script

yarn.nodemanager.node-labels.provider.script.path: /home/hadoop/get_node_label.py

Neil Young - Sleeps With Angels

Hitting #9, Sleeps with Angels by Neil Young and Crazy Horse is mostly unremarkable. I am pretty sure I had never heard any of the songs on this album before listening to it for this project. I like some of Neil Young's earlier albums and some of them are legitimately all time greats. This album is not, and surely hit #9 only on the strength of Neil Young's past work.

The one track I will remark upon is the 11th song on the album, Piece of Crap. This song is Neil Young complaining about things he purchased that broke almost immediately. What is it with middle-aged male rock stars writing songs like this? We've previously seen this with Jimmy Buffet. No one cares about your opinion!

In summary, if you want some Neil Young, try some of his better albums and ignore this one.

more ...Green Day - Dookie

Last week's chart had no new albums in the top 10, so I did not review anything new.

Despite being released for six months, Dookie by Green Day took a while to reach the top ten in the charts. My memory is a bit hazy, but I feel like I became aware of Green Day and this album roughly around this time 30 years ago when I was a beginning 9th grader in high school. At the time it was incredibly cool that this album was made by a band from my home town of Berkeley. It was recorded in Berkeley and the cover art (click here for a high resolution version) had many Berkeley and East Bay landmarks:

- A BART train

- The Chevron Richmond refinery and oil tank farm

- UC Berkeley's Sather Tower (a.k.a. The Campanile)

- The ASUC Student Union building

- A few London Plane trees

- The East Bay hills behind campus

Dookie is probably one of my favorite albums. At the time of writing I've listened to tracks off the album over 500 times. This album definitely has the ability to transport me back to my youth when feelings were bigger and responsibilities were fewer. This album should be in your collection to listen to whenever you need a bit of punk in your day.

more ...Candlebox - Candlebox

Squeaking in at #10 is Candlebox by Candlebox. They were formed in Seattle around the same time as much bigger groups, such as Nirvana, Alice in Chains, and Pearl Jam. This album did quite well, but they never reached the same heights on subsequent albums.

I was never that much into Candlebox. I suppose I heard a few of the songs off this album on the radio, but it never made a big impression on me. 30 years later listening to them again my opinion hasn't changed. It's still not that exciting to me. I'll probably never listen to this album again.

more ...Forrest Gump Soundtrack

Rising to the #3 position, the Forrest Gump Soundtrack is made up of, except for the Forrest Gump Suite, entirely non-original songs. The movie covers 30 years of Forrest Gump's life, from 1951 to 1981, and the songs were chosen to evoke different periods of American history that compliment the on-screen action.

If you click on any of the various online music providers linked at the top of this post, you'll find that none of the links go to full and complete albums. For likely dumb licensing reasons the streaming albums are missing songs. It's dumb because most, if not all, the songs are already available on the streaming platforms, but just in other albums. For example, here is a playlist a user on Tidal made that looks to have all the songs in the movie (although the order looks to be a bit off). Furthermore, you can still buy the soundtrack on physical media (*) which indicates that the licensing agreements are still active.

I have seen the movie and I find it entertaining, and in particular, I think the music helps frame the different periods in American history Forrest Gump experiences quite well.

The album itself is a good collection of American music spanning the 30 year time frame of the movie. Most are genuine hits and there's no filler or pointless covers unlike other soundtracks I've reviewed before. Of all the soundtracks I've reviewed so far, this is by far the best. The only proviso to the album is that on its own, e.g. if the movie never existed, because the songs cover such a wide range of Americana, they are a bit scattered thematically. But as a way to recall the movie, if you want to do that, it works well.

(*) At the time of this writing, you can buy a cassette tape version for nearly $90! Compared to the $14.48 CD version, which is better in nearly every respect, that's quite a premium.

more ...The Rolling Stones - Voodoo Lounge

Last week there was no un-reviewed album in the top 10. This week we jump to the #2 album, which is new to the list this week: Voodoo Lounge by the Rolling Stones. I don't think I need to introduce the Rolling Stones at all. They are one of the most successful and popular rock bands of the last sixty years. If you don't know who the Stones are, I don't know what you're doing on the internet and reading my website. How? Just, how?

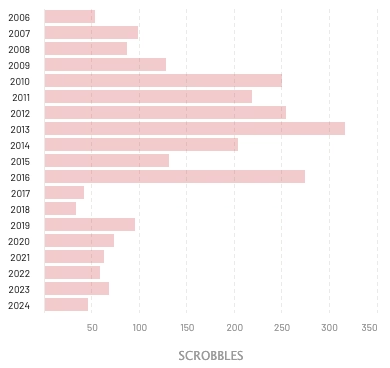

By count, the Stones are in the top ten of my listening history over the last 18 years. Below is a histogram of my listens broken down by year. It looks like the last few years I've somewhat reduced my rates, but I think this has more to do with my focus on trying to find new music I like rather than disliking older music. I definitely still like the Stones and I will still continue to listen to them.

Voodoo Lounge is not the best Stones album, but it's not bad. The first two songs, Love Is Strong and You Got Me Rocking each get a fair amount of radio airplay. Both are on the compilation Forty Licks, which I'm pretty sure I purchased in CD form around the time of release in 2002. Voodoo Lounge itself is just fine, and it's worth a listen. The Stones have many truly great albums that you should definitely check out.

more ...Alan Jackson - Who I Am

Hitting #7 on its first week on the charts, Who I Am by Alan Jackson is pretty standard mid-90s country.

This kind of country has never been "my jam," and this album has not changed my opinion. The songs on this album are not awful, not great, but again, not for me. A few of the songs off this album are on Alan Jackson's 34 Number Ones album. So, yeah, nothing special.

In summary, this album gets a big ol' "meh" from me.

more ...The Lion King Soundtrack

Peaking at #2 this week is the soundtrack for the animated movie The Lion King.

I'm pretty sure I did not see this movie in the theaters when it came out. I was a 14 year old and animated children's movies were too uncool for me (not that I was a very cool 14 year old, to be honest). As it happens, just recently I took my two children (tell that to my 14 year old self) to the re-release of The Lion King in theaters, which I think was the first time I ever watched the movie from beginning to end. I already had a pretty good idea of the arc of the story, both because of the films popularity, and also because it's based on Hamlet. Similarly, I have never watched Titanic, but because of its popularity I am pretty sure I know all the various plot points (also, the boat sinks).

The Lion King is a good movie that at only 88 minutes is shockingly short when compared to modern movies (the live-action The Little Mermaid is 135 minutes long!). The star-studded cast of this movie basically guaranteed the public's interest, and the execution of the plot, characters, and music propelled it to one of the biggest box office hits ever.

Watching the 30 year old film in the theaters it struck me how computerized animation has changed animated movies. The Lion King was hand-drawn, meaning that each cell had a limited amount of complexity. It's not practical to have to redraw complicated scenes hundreds or thousands of times, each at slightly different angles. When I look at modern movies, animators can design and place complicated 3D objects in the digital world just once, and the computer handles rendering that object for all frames. Obviously, this allows for much richer and detailed animated worlds, but there's something to be said for the restrictions that hand animation puts on movie makers — they need to find ways to make a visually meaningful frame some other way.

As a soundtrack, this album is fine. There are some catchy songs and they're fun to listen to outside of watching a movie. Soundtracks are always a bit jumbled because part of a movie soundtrack is to compliment what's on the screen, and that's obviously missing on a soundtrack. The Elton John versions at the end of the album are decent, he's good at what he does.

My final word is that the movie is worth seeing, and the soundtrack exists if you want it, but it's not essential.

more ...Tour de France Pool 2024

Here is the 2024 page for the Tour Pool I've been a part of for 17 years. I'm on team Florky which are in second to last place as I write this. Which is pretty typical.

more ...